Prompts Are Code Now: Versioning, Testing, and Shipping

The Prompt That Quietly Broke Production

A one-line prompt change ships on Friday. By Monday, your support bot is confidently telling customers that refunds take 3 days, even though the policy changed to 7.

No alerts fired, no errors were logged, and nothing crashed. Just silent, scalable misinformation.

This is the new class of production bug: the prompt regression. Most teams aren’t equipped to handle it because prompts are still treated like sticky notes: informal, unversioned, and changed on a whim. That workflow might survive in a demo, but it breaks in production. Prompts are code, and it’s time to treat them like it.

Why This Problem Exists Now

Prompt engineering used to be an art; now it’s core infrastructure. Modern AI systems aren’t just standalone models. They’re complex compositions of foundation models, retrieval systems (RAG), guardrails, tool calling, and routing logic, and prompts sit at the center of all of it.

They control behavior, tone, output format, safety constraints, and factual grounding. A one-word change in a prompt can silently affect thousands of user interactions. Yet most teams still deploy prompt changes with no versioning, no automated tests, and no formal evaluation.

Prompts Have All the Risks of Code, With None of the Guardrails

Three things make this uniquely dangerous:

- Non-determinism: You’re not testing “does function X return Y?” You’re testing whether a response is acceptable across a wide range of possible generative outputs. That is a fundamentally different paradigm, and most teams haven’t made the shift.

- Silent failures: Traditional systems fail loudly. LLMs return an HTTP 200, fluent language, and completely wrong information. You won’t know something broke until a user tells you, or until it shows up in your business metrics.

- Distributed prompts: Prompts don’t live in one place. System prompts, few-shot examples, RAG instructions, tool definitions, and guardrails are scattered across the codebase.

Most prompt failures aren’t caused by bad prompts. They’re caused by interactions between prompts.

The PromptOps Framework

You don’t need new theory to fix this. You need engineering discipline: Store → Verify → Ship.

Store: Version Control for Prompts

Move prompts out of dashboard text boxes and inline strings. Treat them as structured, versioned artifacts that live in your repository alongside your code.

    # prompts/support_agent.yaml
    name: support_agent
    version: "2.1.0"
    system: |
      You are a helpful customer support agent.
      Refunds are processed within 7 business days.
      Never speculate beyond known policies.

This one change unlocks everything: Git history, PR reviews, instant rollbacks, and audit trails.

If you do only one thing after reading this: move your prompts into Git.
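Once prompts live in files, a small loader can validate them before they are used. The following is a minimal sketch, not a definitive implementation. It assumes the YAML file has already been parsed into a dict (for example with PyYAML's `yaml.safe_load`), and `PromptArtifact`, `load_prompt`, and the `fingerprint` property are hypothetical names introduced here for illustration.

```python
import hashlib
import re
from dataclasses import dataclass

# Assumption: versions follow a plain MAJOR.MINOR.PATCH scheme.
SEMVER = re.compile(r"^\d+\.\d+\.\d+$")


@dataclass(frozen=True)
class PromptArtifact:
    name: str
    version: str
    system: str

    @property
    def fingerprint(self) -> str:
        """Short content hash, handy for logging which prompt served a request."""
        digest = hashlib.sha256(self.system.encode("utf-8")).hexdigest()
        return digest[:12]


def load_prompt(data: dict) -> PromptArtifact:
    """Validate a parsed prompt file and return an immutable artifact."""
    missing = {"name", "version", "system"} - set(data)
    if missing:
        raise ValueError(f"prompt file is missing fields: {sorted(missing)}")
    version = str(data["version"])
    if not SEMVER.match(version):
        raise ValueError(f"version must look like 1.2.3, got {version!r}")
    return PromptArtifact(data["name"], version, data["system"].strip())


# This dict mirrors prompts/support_agent.yaml after parsing.
support_agent = load_prompt({
    "name": "support_agent",
    "version": "2.1.0",
    "system": "You are a helpful customer support agent.\n"
              "Refunds are processed within 7 business days.\n"
              "Never speculate beyond known policies.\n",
})
```

Logging `support_agent.version` and `support_agent.fingerprint` with every response makes it possible to trace a bad answer back to the exact prompt text that produced it.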

Verify: Testing Prompts Like Software

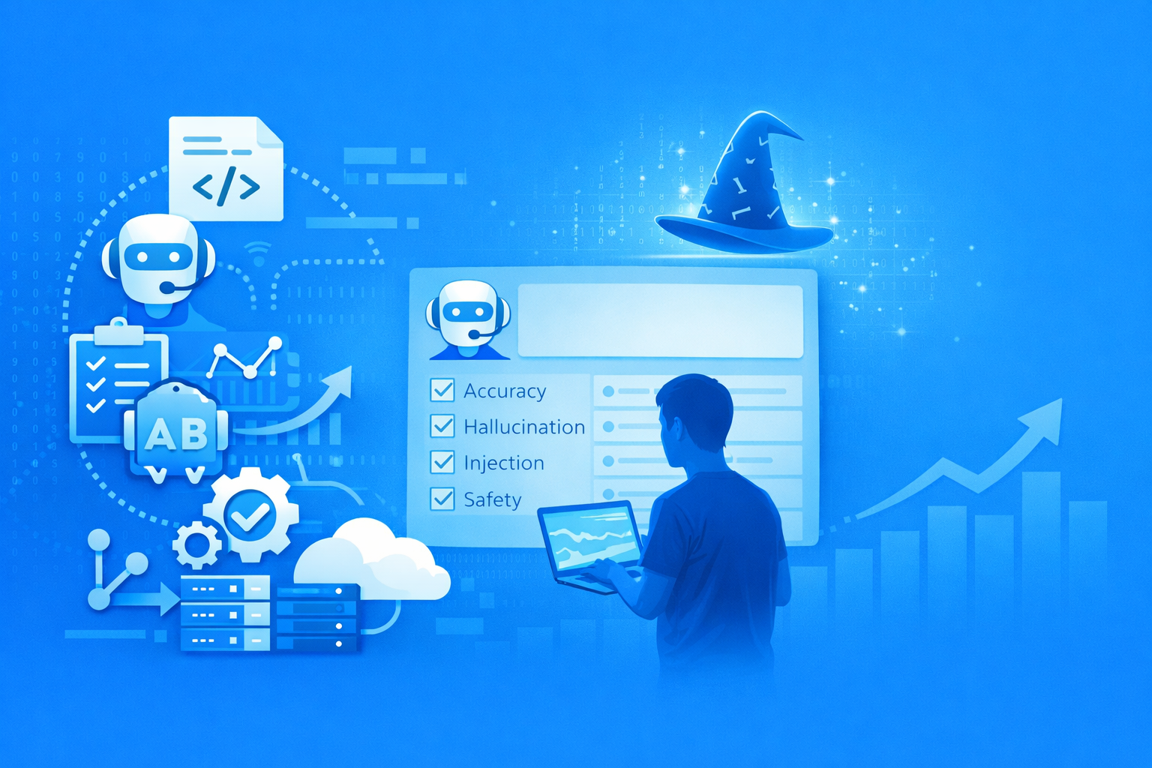

You can’t eyeball your way to quality at scale. Testing needs three distinct layers, supported by modern LLMOps tooling:

- Deterministic checks: Assert that specific facts appear or don’t appear in outputs. These are fast, cheap, and catch obvious regressions. Frameworks like promptfoo excel here. A simple two-line test would have caught the 3-day refund policy bug.

- LLM-as-judge: Use a second model to evaluate helpfulness, tone, and accuracy on a sample of outputs. You’re not testing strict correctness; you’re testing quality distributions. Platforms like LangSmith or TruEra are invaluable for running these evaluations systematically.

- Adversarial testing: Prompt injection, out-of-scope queries, format edge cases, extremely long inputs. If you’re not testing adversarially, you’re not testing enough. This is where the worst security and reputational failures live.
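The deterministic layer can be sketched in a few lines of plain Python. This is an illustration under stated assumptions rather than the API of any particular framework: `FactCheck`, `pass_rate`, and `fake_model` are hypothetical names, and `fake_model` stands in for a real model call so the example runs offline. Because outputs vary between calls, the check is run over several samples and reported as a pass rate.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class FactCheck:
    """Phrases that must, or must not, appear in a response (case-insensitive)."""
    must_contain: List[str] = field(default_factory=list)
    must_not_contain: List[str] = field(default_factory=list)

    def passes(self, output: str) -> bool:
        text = output.lower()
        has_required = all(p.lower() in text for p in self.must_contain)
        has_forbidden = any(p.lower() in text for p in self.must_not_contain)
        return has_required and not has_forbidden


def pass_rate(generate: Callable[[str], str], question: str,
              check: FactCheck, samples: int = 5) -> float:
    """Sample the model several times, since one good answer proves little."""
    passed = sum(check.passes(generate(question)) for _ in range(samples))
    return passed / samples


# Hypothetical stand-in for a real model call.
def fake_model(question: str) -> str:
    return "Refunds are processed within 7 business days."


refund_check = FactCheck(must_contain=["7 business days"],
                         must_not_contain=["3 days", "3 business days"])
rate = pass_rate(fake_model, "How long do refunds take?", refund_check)
```

The same `FactCheck` can be reused for adversarial inputs, for example by asserting that a response to an injection attempt never contains text from the system prompt.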

Ship: CI/CD for Prompts

No prompt should reach production without passing a gauntlet of automated checks. The pipeline mirrors any mature software deployment:

- PR: Triggers unit tests, the eval suite, adversarial tests, and a regression check against a baseline.

- Staging: Shadow traffic, cost and latency benchmarks, and human spot-checks on a sample.

- Production: Hallucination rate monitoring, user feedback signals, and auto-rollback on alerts.
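The regression check in the PR stage can be as simple as comparing evaluation scores for the candidate prompt against scores for the prompt currently in production. The sketch below assumes each score is a number between 0 and 1 produced by your eval suite. `regression_gate`, the `max_drop` tolerance, and the sample scores are all illustrative, not values from a real system.

```python
from statistics import mean
from typing import Sequence


def regression_gate(baseline: Sequence[float], candidate: Sequence[float],
                    max_drop: float = 0.02) -> bool:
    """Return True if the candidate's mean score is within max_drop of baseline."""
    if not baseline or not candidate:
        raise ValueError("both score lists must be non-empty")
    return mean(candidate) >= mean(baseline) - max_drop


# Illustrative eval scores, one per test case.
baseline_scores = [0.92, 0.88, 0.95, 0.90]   # prompt currently in production
candidate_scores = [0.91, 0.90, 0.94, 0.89]  # prompt proposed in the PR

ok_to_merge = regression_gate(baseline_scores, candidate_scores)
```

In a CI job, a failed gate would typically translate into a non-zero exit code so the PR cannot merge. A mean comparison is deliberately crude. Teams with larger eval sets may prefer a per-case comparison or a statistical test.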

Once you’re in production, treat prompt versions like feature flags. In an enterprise architecture, this logic typically lives in your API gateway or a dedicated LLM routing layer:

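    import zlib  # crc32 is stable across processes; hash() of a str is not
    # 10% of users get the candidate, 90% get stable
    def route(user_id):
        if zlib.crc32(str(user_id).encode()) % 100 < 10:
            return route_to_llm(candidate_prompt)
        return route_to_llm(stable_prompt)

Measure task success, satisfaction, and conversion per version. Promote the winners and kill the losers.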
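Measuring per version mostly comes down to tagging every interaction with the prompt version that served it and aggregating the outcomes. The sketch below assumes a log of `(version, succeeded)` pairs. The function names, the `min_samples` threshold, and the sample data are hypothetical, and the raw comparison of rates is not a substitute for a proper significance test.

```python
from collections import defaultdict
from typing import Dict, Iterable, Optional, Tuple


def success_rates(events: Iterable[Tuple[str, bool]]) -> Dict[str, Tuple[float, int]]:
    """Map each prompt version to (success rate, number of interactions)."""
    totals = defaultdict(lambda: [0, 0])  # version -> [successes, count]
    for version, succeeded in events:
        totals[version][0] += int(succeeded)
        totals[version][1] += 1
    return {v: (wins / n, n) for v, (wins, n) in totals.items()}


def pick_winner(rates: Dict[str, Tuple[float, int]],
                min_samples: int = 100) -> Optional[str]:
    """Return the best version, or None until every arm has enough data."""
    if not rates or any(n < min_samples for _, n in rates.values()):
        return None
    return max(rates, key=lambda v: rates[v][0])


# Illustrative log: 100 interactions on stable, 10 on the candidate.
events = ([("stable", True)] * 80 + [("stable", False)] * 20
          + [("candidate", True)] * 9 + [("candidate", False)] * 1)
rates = success_rates(events)
winner = pick_winner(rates)  # None here: the candidate has too few samples
```

Refusing to name a winner until each arm reaches `min_samples` guards against promoting a candidate on the strength of a handful of lucky interactions.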

Where to Start

| Stage | Focus |
|---|---|
| Day 1 | Prompts in Git |
| Week 1 | Deterministic tests |
| Month 1 | Eval suite + CI |
| Month 3 | A/B experimentation |
| Month 6 | Full observability |

The Real Shift

This isn’t a tooling problem; it’s a mindset problem. Prompt engineering isn’t the hard part anymore. Prompt management is.

The winning teams don’t ask “what’s the best prompt?” in isolation. They ask:

- How do we know this prompt is better?

- How do we ship it safely?

- How do we detect when it breaks?

Conclusion

Prompts aren’t fragile because they’re new. They’re fragile because we treat them like text instead of systems.

The teams that win won’t write better prompts. They’ll build better systems around them.

Start simple: put your prompts in files, use version control, and write a few tests. That alone puts you ahead of most teams shipping LLM apps in production today.
